EPiQC Students Present Quantum Computing Research at ASPLOS 2019

Two new approaches for bringing quantum computers closer to practical reality were presented at the prestigious ASPLOS conference in Providence, RI last week by students and researchers from the EPiQC (Enabling Practical-scale Quantum Computation) collaboration, an NSF Expedition led by UChicago Prof. Fred Chong. The annual ASPLOS (Architectural Support for Programming Languages and Operating Systems) conference is “the premier forum for multidisciplinary systems research spanning computer architecture and hardware, programming languages and compilers, operating systems and networking.”

The EPiQC papers described a new compiler approach that combines insights from physics and computer science to speed up quantum programs by as much as tenfold, and a technique that uses noise data from quantum hardware to increase reliability on today's error-prone machines. Both projects support the mission of EPiQC to bridge the gap from existing theoretical algorithms to practical quantum computing architectures on near-term devices.

Quantum Optimal Control Compiler Provides Speed Boost to Quantum Computers

The first paper, presented at the conference by graduate student Yunong Shi, adapts quantum optimal control, an approach developed by physicists long before quantum computing was possible. Quantum optimal control fine-tunes the control knobs of quantum systems in order to continuously drive particles to desired quantum states, or in a computing context, to implement a desired program. If successfully adapted, quantum optimal control would allow quantum computers to execute programs at the highest possible efficiency, but that efficiency comes at a steep cost in compilation time.

“Physicists have actually been using quantum optimal control to manipulate small systems for many years, but the issue is that their approach doesn’t scale,” Shi said.

Even with cutting-edge hardware, it takes several hours to run quantum optimal control targeted to a machine with just 10 quantum bits (qubits). Moreover, this running time grows exponentially with the number of qubits, which makes quantum optimal control untenable for the 20- to 100-qubit machines expected in the coming year.
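A back-of-the-envelope illustration of why the cost explodes: optimal control works with the full quantum description of the system, and the unitary matrix describing n qubits has 2^n by 2^n complex entries. The Python sketch below only counts those entries; it is not a model of any particular optimal-control tool's running time, and the helper name `unitary_entries` is hypothetical.

```python
def unitary_entries(num_qubits: int) -> int:
    """Count the complex entries in the 2^n x 2^n unitary acting on n qubits."""
    dim = 2 ** num_qubits  # dimension of the n-qubit state space
    return dim * dim

for n in (2, 5, 10, 20):
    print(f"{n:>2} qubits: state dimension {2 ** n:,}, unitary entries {unitary_entries(n):,}")
```

Each added qubit quadruples the size of the matrix, which is one reason a method that already takes hours at 10 qubits becomes untenable at 20 to 100.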

Meanwhile, computer scientists have developed their own methods for compiling quantum programs down to the control knobs of quantum hardware. The computer science approach has the advantage of scalability: compilers can easily compile programs for machines with thousands of qubits. However, these compilers are largely unaware of the underlying quantum hardware. Often, there is a severe mismatch between the quantum operations the software works with and the ones the hardware actually executes. As a result, the compiled programs are inefficient.

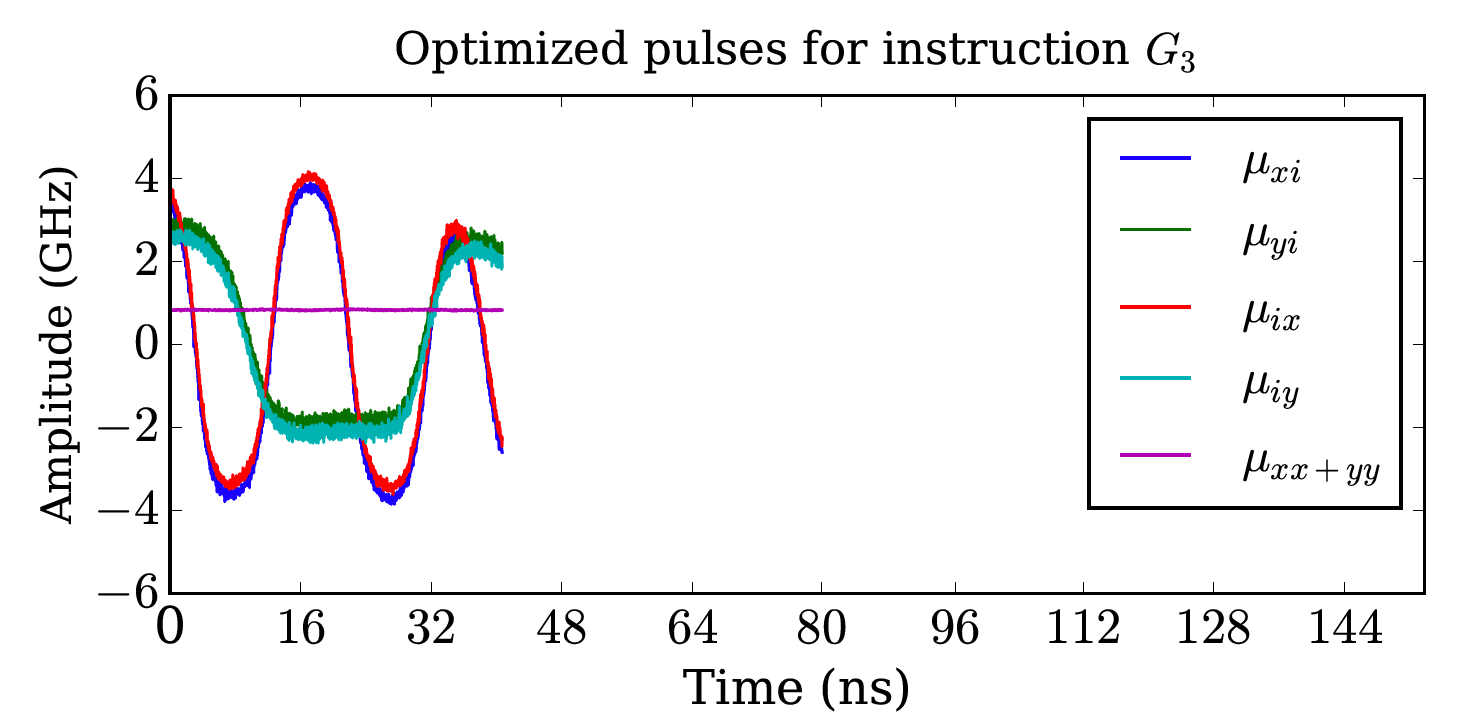

The EPiQC team’s work merges the computer science and physics approaches by intelligently splitting large quantum programs into subprograms. Each subprogram is small enough that it can be handled by the physics approach of quantum optimal control, without running into performance issues. This approach realizes both the program-level scalability of traditional compilers from the computer science world and the subprogram-level efficiency gains of quantum optimal control.
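To make the splitting step concrete, here is a minimal Python sketch under simplifying assumptions: a program is a list of `(gate_name, qubits)` tuples, and a hypothetical `max_width` limit stands in for the largest subprogram optimal control can handle. Unlike the EPiQC compiler, it simply cuts the gate sequence in order and does not exploit commutativity, so treat it as an illustration of the idea rather than the team's algorithm.

```python
from typing import List, Set, Tuple

Gate = Tuple[str, Tuple[int, ...]]  # (gate name, program qubits it acts on)

def partition(gates: List[Gate], max_width: int) -> List[List[Gate]]:
    """Greedily cut a gate list into consecutive blocks touching at most max_width qubits.

    Blocks preserve program order, so running them one after another is equivalent
    to running the original program. Commutativity is NOT used here.
    """
    blocks: List[List[Gate]] = []
    current: List[Gate] = []
    active: Set[int] = set()  # qubits touched by the current block
    for gate in gates:
        qubits = set(gate[1])
        if len(qubits) > max_width:
            raise ValueError(f"gate {gate} is wider than max_width={max_width}")
        if len(active | qubits) > max_width:
            # Adding this gate would make the block too wide: close it and start a new one.
            blocks.append(current)
            current, active = [], set()
        current.append(gate)
        active |= qubits
    if current:
        blocks.append(current)
    return blocks

program = [("h", (0,)), ("cx", (0, 1)), ("rz", (1,)),
           ("cx", (1, 2)), ("h", (3,)), ("cx", (2, 3))]
for i, block in enumerate(partition(program, max_width=2)):
    print(f"subprogram {i}: {block}")
```

Each resulting block is small enough to hand to a slow but precise optimizer on its own; a smarter partitioner could reorder commuting gates first to produce fewer, larger blocks.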

The intelligent generation of subprograms is driven by an algorithm that exploits commutativity, a property of certain quantum operations that allows them to be reordered without changing the result of the computation. Across a wide range of quantum algorithms, relevant in both the near term and the long term, the EPiQC team’s compiler achieves execution speedups of two to ten times over the baseline. And because qubits are so fragile, these speedups in program execution translate into exponentially higher success rates for the ultimate computation. As Shi emphasizes, “on quantum computers, speeding up your execution time is do-or-die.”
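Whether two operations commute can be checked directly for small gates: matrices A and B commute when AB = BA. The brute-force Python sketch below does this for two-qubit gates using plain nested lists, confirming that a CNOT commutes with a Z on its control qubit and with an X on its target, but not with an X on its control. Practical compilers generally rely on cheaper algebraic rules rather than multiplying matrices, so this is only meant to illustrate the property; the helper names are hypothetical.

```python
from typing import List

Matrix = List[List[complex]]

def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Multiply two square matrices given as nested lists."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)] for i in range(n)]

def kron(a: Matrix, b: Matrix) -> Matrix:
    """Kronecker (tensor) product: a acts on qubit 0, b acts on qubit 1."""
    nb = len(b)
    size = len(a) * nb
    return [[a[i // nb][j // nb] * b[i % nb][j % nb] for j in range(size)] for i in range(size)]

def commute(a: Matrix, b: Matrix, tol: float = 1e-12) -> bool:
    """True if AB equals BA up to floating-point tolerance."""
    ab, ba = matmul(a, b), matmul(b, a)
    n = len(a)
    return all(abs(ab[i][j] - ba[i][j]) < tol for i in range(n) for j in range(n))

IDENT = [[1, 0], [0, 1]]
X = [[0, 1], [1, 0]]
Z = [[1, 0], [0, -1]]
# CNOT with qubit 0 as control and qubit 1 as target; basis order |00>, |01>, |10>, |11>.
CNOT = [[1, 0, 0, 0],
        [0, 1, 0, 0],
        [0, 0, 0, 1],
        [0, 0, 1, 0]]

print(commute(CNOT, kron(Z, IDENT)))  # Z on the control: True
print(commute(CNOT, kron(IDENT, X)))  # X on the target: True
print(commute(CNOT, kron(X, IDENT)))  # X on the control: False
```

Gates that commute with their neighbors can be slid past them, which gives a compiler freedom to gather related operations into the same subprogram.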

“Past compilers for quantum programs have been modeled after compilers for modern conventional computers,” said Chong, Seymour Goodman Professor of Computer Science at UChicago and lead PI for EPiQC. But unlike conventional computers, quantum computers are notoriously fragile and noisy, so techniques optimized for conventional computers don’t port well to quantum computers. “Our new compiler is unlike the previous set of classically-inspired compilers because it breaks the abstraction barrier between quantum algorithms and quantum hardware, which leads to greater efficiency at the cost of having a more complex compiler.”

Using Noise Data to Increase Reliability of Quantum Computers

The other study, a collaboration between EPiQC researchers and IBM presented at ASPLOS by Princeton graduate student Prakash Murali, turns the quantum computer's notorious noisiness into the basis of a new technique that improves the reliability of operations on real hardware. The quantum computers of today and of the next 5 to 10 years are limited by noisy operations, in which gate operations introduce inaccuracies and errors. As a program executes, these errors accumulate and can lead to wrong answers.

To offset these errors, users run quantum programs thousands of times and select the most frequent answer as the correct answer. The frequency of this answer is called the success rate of the program. In an ideal quantum computer, this success rate would be 100% — every run on the hardware would produce the same answer. However, in practice, success rates are much less than 100% because of noisy operations.
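The success-rate bookkeeping described above can be sketched in a few lines of Python. The noise model here is a deliberately simple assumption (each output bit flips independently with probability `flip_prob`), and the functions `noisy_run` and `success_rate` are hypothetical names; real hardware noise is far less uniform.

```python
import random
from collections import Counter
from typing import Tuple

def noisy_run(correct: str, flip_prob: float, rng: random.Random) -> str:
    """One simulated run: each output bit flips independently with probability flip_prob."""
    return "".join(
        ("1" if bit == "0" else "0") if rng.random() < flip_prob else bit
        for bit in correct
    )

def success_rate(correct: str, flip_prob: float, shots: int, seed: int = 0) -> Tuple[str, float]:
    """Run the program `shots` times; return the most frequent answer and its frequency."""
    rng = random.Random(seed)
    counts = Counter(noisy_run(correct, flip_prob, rng) for _ in range(shots))
    answer, freq = counts.most_common(1)[0]
    return answer, freq / shots

answer, rate = success_rate("1011", flip_prob=0.05, shots=8192)
print(answer, round(rate, 3))
```

With four output bits and a 5% flip probability, the correct answer still wins the vote, but it appears in only roughly 0.95^4, or about 81%, of runs.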

The researchers observed that on real hardware, such as the 16-qubit IBM system, the error rates of quantum operations vary widely across the different hardware resources (qubits and gates) in the system. These error rates can also change from day to day. The researchers found that operation error rates can vary by up to a factor of 9 depending on when and where an operation runs. When a program is run on this machine, the hardware qubits chosen for the run determine its success rate.

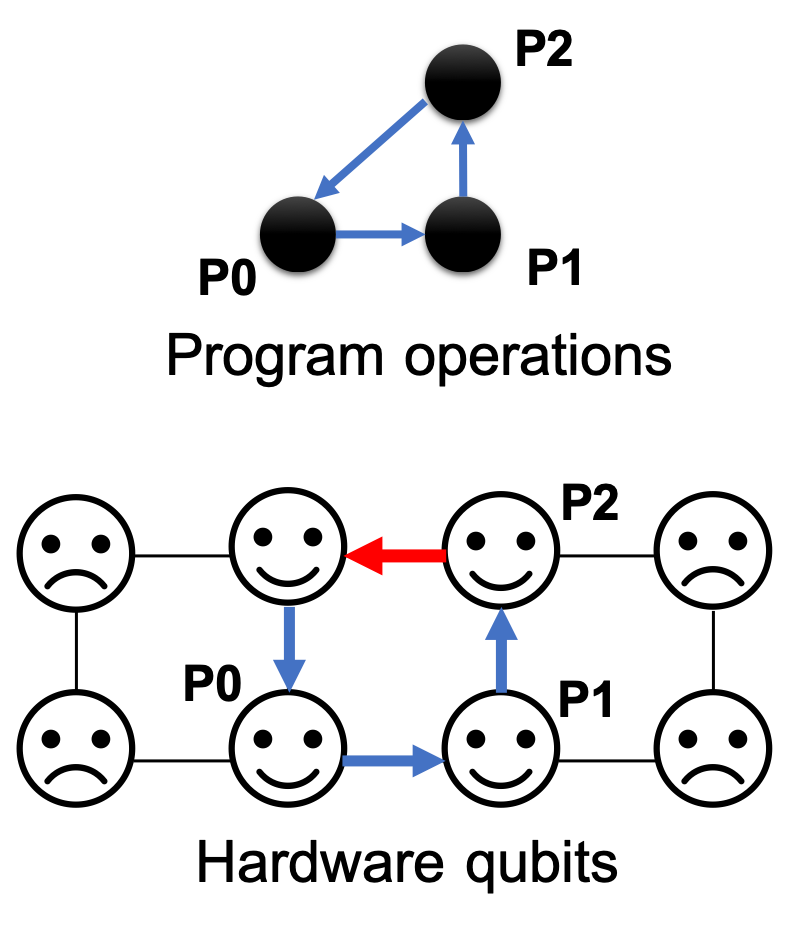

“If we want to run a program today, and our compiler chooses a hardware gate (operation) which has poor error rate, the program’s success rate dips dramatically,” said Murali. “Instead, if we compile with awareness of this noise and run our programs using the best qubits and operations in the hardware, we can significantly boost the success rate.”

To exploit this idea of adapting program execution to hardware noise, the researchers developed a “noise-adaptive” compiler that uses detailed noise characterization data for the target hardware. Such noise data is routinely measured for IBM quantum systems as part of daily calibration and includes the error rates for each type of operation the hardware supports. Leveraging this data, the compiler maps program qubits to hardware qubits with low error rates and schedules gates to finish quickly, reducing the chance that qubit states decay through decoherence. It also minimizes the number of communication operations and performs them using reliable hardware operations.
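The core of noise-aware qubit mapping can be illustrated with a toy example. The Python sketch below assumes a made-up 4-qubit device with hypothetical readout and CNOT error rates (not real IBM calibration data) and a two-qubit program consisting of one CNOT followed by measuring both qubits. It estimates each placement's success rate as the product of (1 - error) over the operations used and picks the best by exhaustive search; the published compiler uses more sophisticated optimization, and this sketch ignores gate timing and communication operations.

```python
from itertools import permutations
from typing import Dict, Optional, Tuple

# Hypothetical calibration data for a toy 4-qubit device (illustrative, NOT real IBM numbers).
READOUT_ERROR: Dict[int, float] = {0: 0.02, 1: 0.08, 2: 0.03, 3: 0.05}
CNOT_ERROR: Dict[Tuple[int, int], float] = {
    (0, 1): 0.030, (1, 2): 0.090, (2, 3): 0.020, (0, 3): 0.045,
}

def link_error(a: int, b: int) -> Optional[float]:
    """CNOT error between two hardware qubits, or None if they are not directly coupled."""
    if (a, b) in CNOT_ERROR:
        return CNOT_ERROR[(a, b)]
    return CNOT_ERROR.get((b, a))

def estimated_success(control: int, target: int) -> float:
    """Estimate success of 'CNOT, then measure both qubits' as a product of (1 - error) terms."""
    err = link_error(control, target)
    if err is None:
        return 0.0  # uncoupled pair; a real compiler would route with extra operations instead
    return (1 - err) * (1 - READOUT_ERROR[control]) * (1 - READOUT_ERROR[target])

def best_placement() -> Tuple[int, int]:
    """Exhaustively try every ordered pair of hardware qubits as (control, target)."""
    return max(permutations(READOUT_ERROR, 2), key=lambda pair: estimated_success(*pair))

best = best_placement()
print(best, round(estimated_success(*best), 3))
```

On this toy data the search settles on hardware qubits 2 and 3, which combine a low-error link with good readout, while placing the program on qubits 1 and 2 would cost about nine percentage points of estimated success. Because calibration data changes daily, a noise-adaptive compiler would rerun this kind of selection against fresh numbers.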

“When we run large-scale programs, we want the success rates to be high to be able to distinguish the right answer from noise and also to reduce the number of repeated runs required to obtain the answer,” emphasized Murali. “Our evaluation clearly demonstrates that noise-adaptivity is crucial for achieving the full potential of quantum systems.”