Intel Research Awards Go To Two UChicagoCS Faculty for Video Analytics, “Serverless” Cloud Projects

Two faculty members from the University of Chicago Department of Computer Science received research awards from Intel to study new approaches for analyzing video data and advance cloud computing resources to support real-time applications.

Sanjay Krishnan, assistant professor at UChicago CS, will use the Intel grant to create a new general-purpose system for storing and processing video data and applying computer vision tools to it, such as footage from stores, sports events, and traffic cameras. The work will build upon the DeepLens system he’s developing with assistant professor Aaron Elmore and graduate students Adam Dziedzic and Pranav Subramaniam.

Andrew Chien, the William Eckhardt Distinguished Service Professor at UChicago CS, will use Intel funding to accelerate a collaboration with assistant professor Junchen Jiang and graduate student Hai Duc Nguyen. The project studies how serverless cloud computing can be improved to better serve the real-time demands of edge computing applications such as video monitoring, traffic control, sensors and other novel “Internet of Things” applications.

A Search Engine for Video Data

Today, we are used to quickly searching through text or running calculations on stored data thanks to four decades of progress in relational databases. But performing the same tasks on video data remains much less convenient. A business owner who wants to analyze where customers spend time inside their store or a baseball analyst looking for all examples of a particular kind of play must often resort to manually combing through footage or, at best, developing a one-off computer vision solution.

The reason for this difficulty is that video data is stored, processed and encoded for human viewers watching Netflix, not for computers that can efficiently execute searches or analytic tasks. With DeepLens, Krishnan is exploring the potential of building a new system for “machine readable” video data, drawing upon the history of relational databases and adding in more recent innovations such as machine learning.

“We think that video analytics is where databases were in the 1970s, when companies were coming up with their own formats, their own interfaces, and their own optimizations on how to make things fast,” Krishnan said. “The standardization on the 'relational model', or the familiar organization of data into tables of rows and columns, was the key change that led to the development of interoperable software, the widespread adoption of such databases in practice, and allowed the research community to optimize database components in isolation. We want to catalyze the same change in video analytics but with the advantage of 40 years of technological hindsight on how to build performant data analytics systems.”

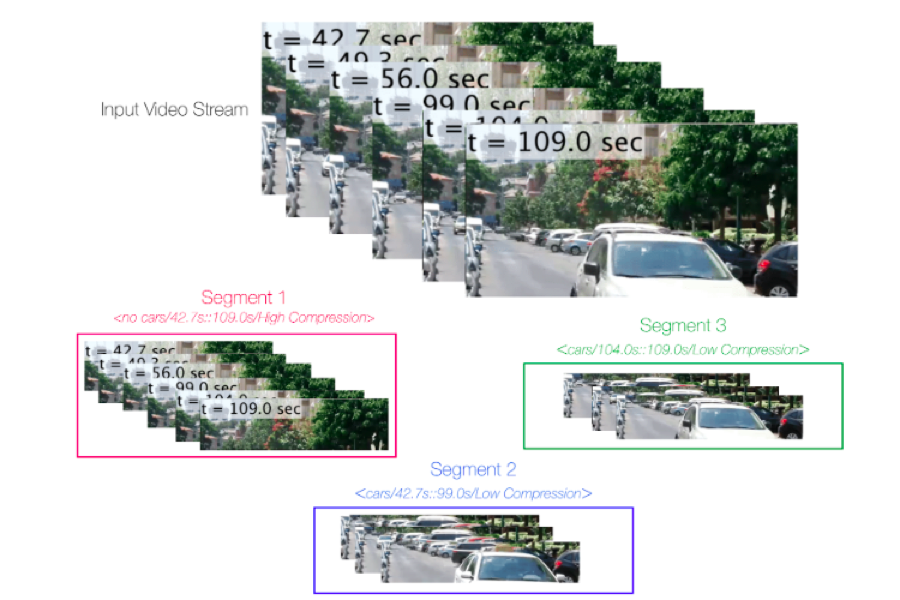

With the Intel project, Krishnan’s group will focus on the bottom level of this stack, exploring new file formats and how Intel’s storage manager works with DeepLens. The system will ingest video footage and cut it up into component parts that make subsequent analysis easier; for example, traffic footage may be divided by vehicle type (cars, bikes, and other vehicles), by license plate, vehicle color, or other identifying features, or by length of video or time of day. A subsequent database query for “red cars,” or even for how often a specific red car of interest appears in the data, can then be executed much more quickly and efficiently.
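As a rough illustration of the idea in the paragraph above (not DeepLens itself, whose interfaces are not described here), the sketch below stores hypothetical per-frame detections in a relational table at ingest time and then answers a “red cars” query with ordinary SQL. The table layout, the sample detections, and the function names `build_index` and `find` are all assumptions made for this example.

```python
import sqlite3

# Hypothetical detections, as if a vision model had labeled the footage at
# ingest time: (timestamp in seconds, object label, dominant color).
DETECTIONS = [
    (0.0, "car", "red"),
    (0.5, "bike", "blue"),
    (1.0, "car", "white"),
    (1.5, "car", "red"),
    (2.0, "truck", "red"),
]


def build_index(detections):
    """Load detections into an in-memory table indexed by label and color."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE detections (ts REAL, label TEXT, color TEXT)")
    conn.executemany("INSERT INTO detections VALUES (?, ?, ?)", detections)
    conn.execute("CREATE INDEX idx_label_color ON detections (label, color)")
    return conn


def find(conn, label, color):
    """Return the timestamps at which an object of this label and color appears."""
    rows = conn.execute(
        "SELECT ts FROM detections WHERE label = ? AND color = ? ORDER BY ts",
        (label, color),
    )
    return [ts for (ts,) in rows]


conn = build_index(DETECTIONS)
red_car_times = find(conn, "car", "red")
print(red_car_times)
```

The point of the sketch is only that once footage has been broken into labeled parts, the expensive vision work is done once at ingest, and later questions become cheap lookups rather than a fresh pass over the video.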

“The whole goal is that exploring video or visual content should be just as easy as exploring documents or text with a search engine,” Krishnan said. “We're optimizing the system for analytics.”

Enabling the Serverless Edge

As the devices collecting this video and other constant streams of data proliferate, scientists and engineers have pursued the concept of “edge computing,” where data is processed and analyzed at the source of the recording before being acted upon or sent to long-term storage. Key advantages of “edge” are the potential for real-time actions, such as alerting emergency responders to an accident at an intersection, as well as bandwidth savings, where the device transmits only data of interest and discards the rest.

The “bursty” behavior of these edge computing applications is a good match for “serverless” cloud computing resources. Instead of buying expensive, dedicated servers to run applications, owners can rent “on demand” virtual servers from the data centers of Google, Amazon, and other vendors, paying only for what they use, when they use it. However, today’s serverless offerings provide no guarantees, and resources may not be provided immediately when demand unexpectedly surges, as would be expected for sensors and devices looking for rare events. Those delays could be long enough to make real-time analytics implausible.

To address this problem, Chien’s group will work with Intel to explore the concept of “guaranteed allocation rate” in serverless cloud computing for real-time platforms. Under this model, cloud providers guarantee the invocation rate of a serverless function — essentially promising to provide increased resources to an application within a certain timespan. The guarantee would permit real-time functions without requiring customers to reserve machines that will sit dormant for long stretches.
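To see why such a guarantee matters for real-time work, consider a deliberately simplified model. It is an assumption for illustration, not the formulation used by Chien’s group: the provider promises to start at least `rate_per_sec` invocations every second, each invocation runs for `run_time_sec`, and a burst of `burst_size` requests arrives all at once. Under those assumptions the finish time of the last request has a simple upper bound, which can be checked against a deadline. The function names are hypothetical.

```python
def worst_case_completion(burst_size, rate_per_sec, run_time_sec):
    """Upper bound (seconds) on when the last invocation in a burst finishes.

    Assumes the provider starts at least rate_per_sec invocations per second,
    so the last of burst_size requests starts no later than
    burst_size / rate_per_sec seconds after the burst arrives.
    """
    if rate_per_sec <= 0:
        raise ValueError("a guaranteed rate must be positive")
    worst_start_delay = burst_size / rate_per_sec
    return worst_start_delay + run_time_sec


def meets_deadline(burst_size, rate_per_sec, run_time_sec, deadline_sec):
    """True if the whole burst is certain to finish within the deadline."""
    return worst_case_completion(burst_size, rate_per_sec, run_time_sec) <= deadline_sec


# A burst of 20 detections, each needing 0.5 s of compute, with a 3 s deadline.
fast = meets_deadline(burst_size=20, rate_per_sec=10, run_time_sec=0.5, deadline_sec=3.0)
slow = meets_deadline(burst_size=20, rate_per_sec=5, run_time_sec=0.5, deadline_sec=3.0)
print(fast, slow)
```

Without any guaranteed rate, no such bound can be computed, which is the gap the paragraph above describes in today’s serverless offerings.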

“What we've been able to figure out is, you can solve this quality of performance problem just by adding one little thing to serverless, which is this guaranteed allocation rate, or guaranteed invocation rate,” Chien said. “This one idea actually allows you to prove that you can deliver real-time performance for all these kinds of applications, and so it's the magic element that makes all of this whole new class of applications possible.”

The Intel funding will join a broader NSF-funded project that is creating the resource management techniques cloud providers need to offer a guaranteed allocation rate efficiently, and exploring how these approaches enable new, more efficient online applications in video analytics. The results could not only advance cloud computing applications but also, by enabling “killer apps,” accelerate the rollout of 5G wireless communication and hardware optimized for edge computing.

“Intel is interested in providing the best hardware support for these strong real-time guarantees and performance isolation,” Chien said. “This kind of support enables sharing — you can actually run multiple real-time applications without interference, and of course applications only pay for what they use! Intel is interested in the context of edge and 5G, because these real-time applications are a key driver.”