Computational Researchers Join Quest to Search Beyond the Higgs Boson

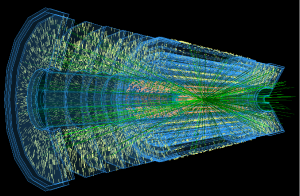

In 2026, the Large Hadron Collider at the CERN laboratory in Geneva, Switzerland, will begin its next phase, probing the mysteries of the universe through collisions of its most intense beams to date. But each year, the High-Luminosity LHC (HL-LHC) will generate billions of gigabytes of data, up to 100 times more than was required to discover the Higgs boson.

Meeting those immense data demands and enabling new discoveries will require innovative software and computing approaches for data analysis, organization and management. The new $25 million Institute for Research and Innovation in Software for High-Energy Physics, or IRIS-HEP, will meet these challenges by gathering researchers from 17 U.S. universities, including the University of Chicago.

Over the next five years, the National Science Foundation-funded IRIS-HEP, driven primarily by the HL-LHC, will be an active center for software R&D in innovative algorithms, data analysis platforms, and data organization, management and access systems. Led by Princeton University, the group includes researchers from UChicago’s Enrico Fermi Institute and Department of Computer Science who will help create the tools needed to fulfill the scientific potential of these unprecedented experiments.

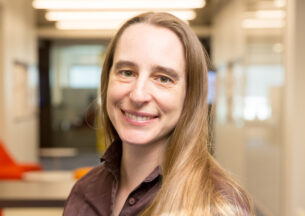

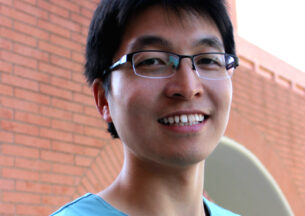

From UChicago CS, Asst. Prof. Aaron Elmore will contribute to the group’s Data Organization, Management and Access team, developing new ways to organize and deliver HL-LHC data to diverse analysis platforms around the world. Andrew A. Chien, the William Eckhardt Distinguished Service Professor in Computer Science, will explore distributed techniques to scale analyses and infrastructure, particularly studying novel hardware accelerators that transform data for efficient transmission and analysis.

“We’re not trying to reduce the number of bits transported across the networks, but to maximize the information content of each bit, and thereby multiply the amount of science the physics community can do by ten or even one hundred times,” Chien said. “By filtering down to the right data and choosing efficient representations, the research analysis can scale up, even for each specific network, storage or compute capability in the LHC computing system.”

For more on IRIS-HEP, visit UChicago News.