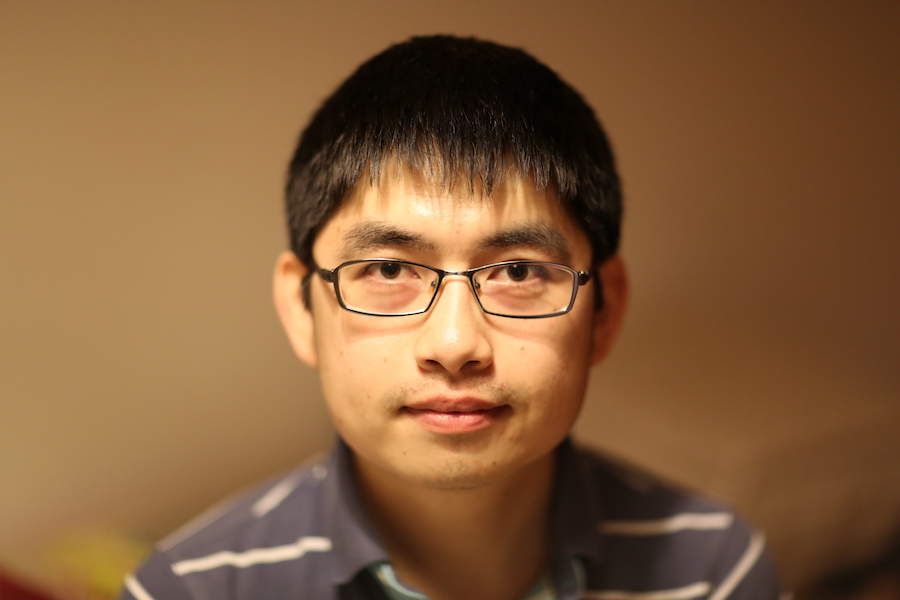

New UChicago CS Asst. Prof. Yuxin Chen Turns AI Into an Active Learner and Teacher

In its most simplified form, machine learning works like a giant funnel: a researcher pours a lot of data in, and a predictive model comes out. The machine learning model, in this metaphor, plays a largely passive role, simply taking the data it is given and determining the best way to use it. But to fully unleash the potential of artificial intelligence for technology and science, researchers are studying new approaches that make these models more active collaborators, both as eager students and as responsive teachers.

Yuxin Chen, who joined UChicago CS this fall as an assistant professor, has developed and applied many forms of this “interactive machine learning.” In his previous work at Caltech, he worked with computational biologists, neuroscientists, mechanical engineers, and physicists on new algorithms for discovery and analysis. Together, they built new models that not only learn from the original data they are fed, but continually seek out new data to further improve their performance.

“One very fundamental question is, how can we design a system that can actively gather information, especially when facing a large volume of data?” Chen said. “Interactive machine learning is a regime where the machine actually interacts with people and the environment, trying to adapt and gather useful information to learn for itself.”

Machine learning models are basically decision-making machines, using known examples to answer a question — such as, is this a photo of a cat or a dog? — with some level of uncertainty. With interactive machine learning, when a model is uncertain about its answer, it can request more data, either from a human or a remote database, that would help raise its confidence. Think of a robot navigating an unfamiliar environment, Chen said, and constantly gathering new information to avoid running into people passing by or falling down the stairs.
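The idea of asking for help where the model is least sure can be illustrated with a small sketch. This is only one common strategy, usually called uncertainty sampling, and not necessarily the approach used in Chen's work. The photo names and probabilities below are made up for illustration.

```python
import math

def entropy(p):
    """Binary entropy (in bits) of a predicted probability p for one class."""
    if p <= 0.0 or p >= 1.0:
        return 0.0  # the model is certain, so there is no uncertainty to resolve
    return -(p * math.log2(p) + (1 - p) * math.log2(1 - p))

def pick_query(predictions):
    """Return the id of the example the model is least sure about.

    predictions: dict mapping example id -> predicted probability of "cat".
    """
    return max(predictions, key=lambda ex: entropy(predictions[ex]))

# Hypothetical model outputs for three unlabeled photos (assumed values).
predictions = {"photo_a": 0.97, "photo_b": 0.52, "photo_c": 0.10}

# photo_b is closest to a 50/50 guess, so a human label there helps the most.
print(pick_query(predictions))
```

In a full system, the label a person supplies for the chosen photo would be added to the training data, the model would be retrained, and the cycle would repeat.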

Chen has extended this principle to several domains. For example, he has helped researchers designing new proteins or nanostructures, including Nobel laureate Frances Arnold’s research group, search through the vast space of biochemical or physical configurations. The interactive machine learning algorithm not only ingests existing data and predicts which configurations are likely to work best, but also recommends specific experiments that would produce the new data it needs to make even better predictions.
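One way to picture how an algorithm might recommend the next experiment is to balance what has already scored well against what has barely been tested. The sketch below uses a standard upper-confidence-bound rule as a stand-in; it is an assumption for illustration, not the method used in these collaborations, and the variant names and scores are invented.

```python
import math
import statistics

def recommend(results, candidates, bonus=1.0):
    """Pick the next configuration to test.

    results: dict mapping configuration -> list of measured scores so far.
    candidates: list of all configurations under consideration.
    Untested configurations are tried first. Otherwise the choice is the
    highest mean score plus an exploration bonus that shrinks as a
    configuration accumulates measurements.
    """
    total = sum(len(scores) for scores in results.values())
    best, best_value = None, -math.inf
    for config in candidates:
        scores = results.get(config, [])
        if not scores:
            return config  # never tested: nothing is known, so try it first
        value = statistics.mean(scores) + bonus * math.sqrt(math.log(total) / len(scores))
        if value > best_value:
            best, best_value = config, value
    return best

# Hypothetical lab measurements for three protein variants (assumed values).
results = {
    "variant_1": [0.60, 0.64],
    "variant_2": [0.55],
    "variant_3": [0.20, 0.25, 0.22],
}
candidates = ["variant_1", "variant_2", "variant_3"]

# variant_2 looks promising but has only one measurement, so it is chosen.
print(recommend(results, candidates))
```

The `bonus` parameter is a hypothetical knob: larger values favor exploring poorly measured configurations, while smaller values favor repeating the current best.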

In another project, he worked with ecologists who were studying biodiversity conservation by examining drone photos of the rainforest with the naked eye. Drawing upon computer vision methods, Chen built a model that combs through the photos for potential areas of interest, such as orangutan nests, and then asks a human observer to rule on whether each identification is correct. As the human experts confirm or reject more of its candidates, those rulings further train the model to get better at finding nests on its own.

As Chen develops interactive models for these applications, he’s also generating new insights on the theoretical foundations of machine learning, which can be subsequently generalized for many different uses.

“In these collaborations, I ask: Can we solve such interactive machine learning problems in a more intelligent way that’s less time-consuming and less costly?” Chen said. “We want to use these human-machine interactions, but also minimize them over time. The beauty of the theoretical work is, once you have a general theory on how information flows through the interactions, you can actually apply it to a lot of problems.”

This research interest has also led Chen into the related field of “machine teaching.” At its most literal, machine teaching applies the methods of machine learning to automated curriculum design, drawing on students’ responses in quizzes or tests to adjust how they are subsequently taught. Chen has co-developed apps that use this approach to teach the German language or the identification of bird species, and he also considers the principles of machine teaching important for “explainable AI”: systems that don’t just make decisions in a black box but tell users how they arrived at their conclusions.

“If you actually think about optimal decision making with a human in the loop, the machine needs to communicate in a way that could be understandable by a human expert or a human agent,” Chen said. “In order to explain the concept well, one obvious way is to teach it. We call this dynamic interpretability. We’re not trying to explain to users the structure or technical details of the model directly, but rather, to explain the model via teaching examples.”

At UChicago, Chen hopes to build new collaborations. Indeed, one of the main attractions of moving to the university was its close relationship with two national laboratories, Argonne and Fermilab, as well as opportunities to work with domain experts across campus. Chen even started one collaboration before his arrival — a project with Fermilab’s Brian Nord to create a “self-driving telescope” for cosmic surveys.

“There are definitely a lot of exciting places in computer science, but what’s unique for Chicago is the scope of growth. This department feels like an academic startup with numerous opportunities for collaboration,” Chen said. “UChicago’s unique strengths in the physical sciences, its energetic and collegial atmosphere, and the dramatically expanding CS department make it one of the best places for these intimate, multidisciplinary collaborations.”